Concept

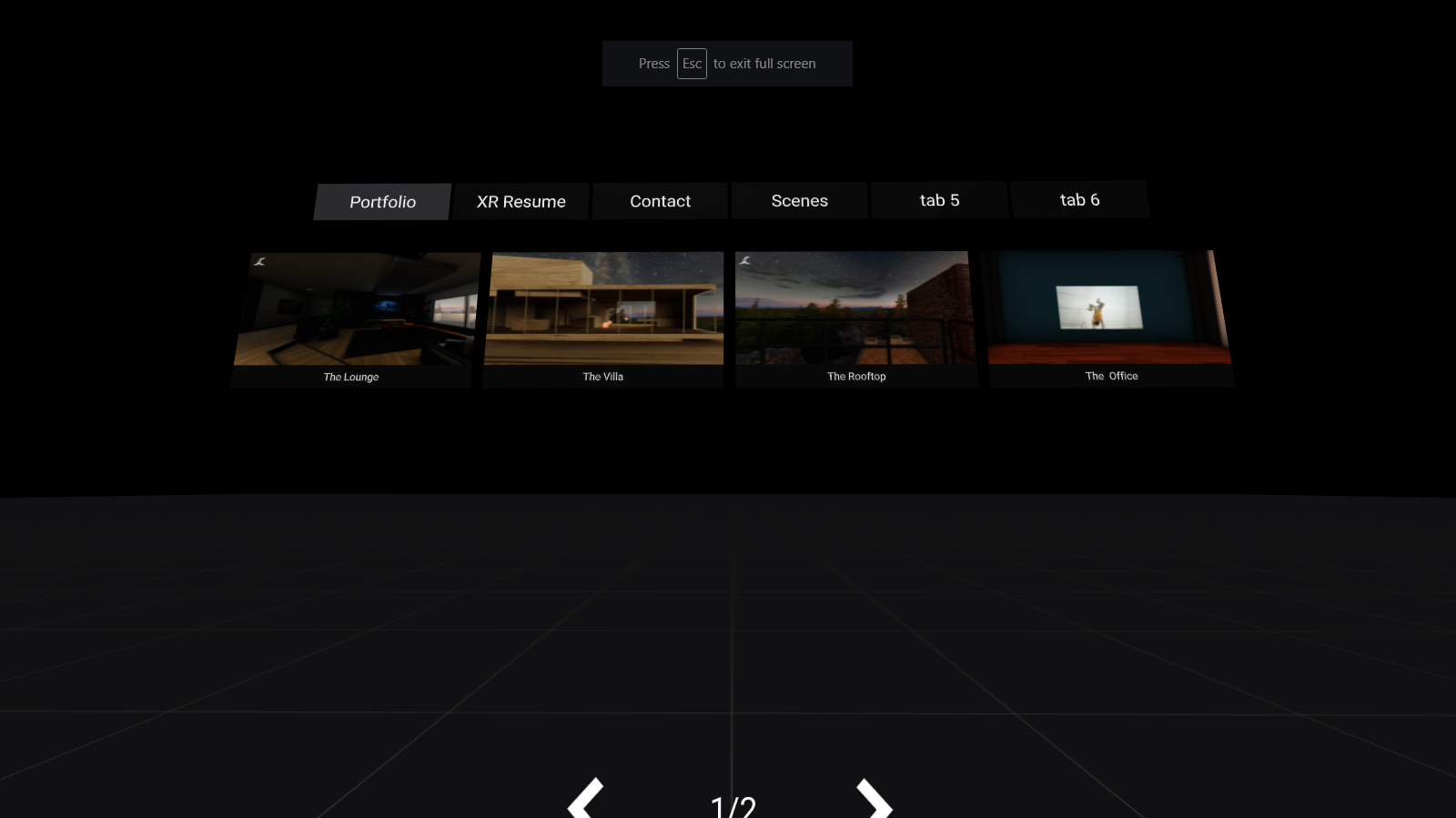

I built a web app that leverages WebXR to display a live video stream as part of a 3D model that exists in both WebAR and WebVR environments.

The WebVR scene allows users to broadcast themselves into a 3D model that they can rotate and move around a virtual scene. The WebAR scene allows users to view that virtual scene in augmented reality, where the 3D model and video stream are controlled by the broadcaster.

Design

In this project, the broadcaster will be represented by a 3D model, and we will play the camera stream as a texture on the face of the model.
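To make the idea of a video texture concrete, here is a minimal sketch of the kind of AFrame markup involved. It assumes a flat plane stands in for the model's face, and the helper name `broadcasterFaceMarkup`, the element id, and the position values are all hypothetical, chosen only for illustration. Attaching the live stream to the `<video>` element (for example through its `srcObject` property) is left out.

```typescript
// Sketch only: builds AFrame markup in which a <video> asset is used as the
// texture of a plane. The plane is an assumed stand-in for the model's face.

// Hypothetical helper; the name and parameters are not from the project.
function broadcasterFaceMarkup(videoId: string): string {
  // Only allow simple ids so the value is safe to drop into the markup.
  if (!/^[A-Za-z][\w-]*$/.test(videoId)) {
    throw new Error(`Invalid element id: ${videoId}`);
  }
  return [
    `<a-assets>`,
    // The live stream would be attached to this element at runtime.
    `  <video id="${videoId}" autoplay playsinline muted></video>`,
    `</a-assets>`,
    // material.src points at the video asset, so its frames become the texture.
    `<a-plane material="src: #${videoId}; shader: flat" position="0 1.6 -1"></a-plane>`,
  ].join("\n");
}

const markup = broadcasterFaceMarkup("broadcaster-video");
console.log(markup);
```

The `flat` shader is used here so the video is not darkened by scene lighting; that is a design choice for the sketch, not something the project prescribes.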

For this build, I plan to use three frameworks: AFrame, because its virtual DOM makes the structure of the scene clear and easy to understand; AR.js, for its cross-platform WebAR; and Agora.io for the live streaming, because it makes integrating real-time video simple.

Framework

In our live broadcast web app, we will have two clients (broadcaster and audience), each with its own UI. The broadcaster UI will use AFrame to create the WebVR portion of the experience, while the audience UI will use AR.js with AFrame to create the WebAR portion.

Function

When you run the demo on your mobile device, you’ll have to accept camera access and sensor permissions for AR.js. Point your phone at the marker image and you should see the 3D Broadcaster appear.

Next, on the Broadcaster Client, use the e, r, d, x, c, and z keys to move the Broadcaster around in the virtual scene, and watch as its position is updated in real time for everyone in the channel.
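The keyboard control can be sketched as a small state update that is then serialized for the audience. Everything here is an assumption made for illustration: the `Pose` shape, the step sizes, and in particular which key does what (the demo uses these six keys, but the mapping below is a guess). How the encoded message is sent to the channel is out of scope.

```typescript
// Sketch only: maps a key press to a change in the broadcaster model's pose.

// Hypothetical pose of the model in the virtual scene.
interface Pose {
  x: number;   // position, in scene units
  y: number;
  z: number;
  yaw: number; // rotation around the vertical axis, in degrees
}

const MOVE_STEP = 0.5; // assumed step size per key press
const TURN_STEP = 5;   // assumed rotation per key press, in degrees

// Assumed bindings; the real demo may assign these keys differently.
const KEY_DELTAS = new Map<string, Partial<Pose>>([
  ["e", { yaw: -TURN_STEP }], // rotate left
  ["r", { yaw: TURN_STEP }],  // rotate right
  ["d", { y: MOVE_STEP }],    // move up
  ["x", { y: -MOVE_STEP }],   // move down
  ["z", { x: -MOVE_STEP }],   // move left
  ["c", { x: MOVE_STEP }],    // move right
]);

// Returns a new pose with the key's delta applied; unbound keys are ignored.
function applyKey(pose: Pose, key: string): Pose {
  const delta = KEY_DELTAS.get(key.toLowerCase());
  if (!delta) return pose;
  return {
    x: pose.x + (delta.x ?? 0),
    y: pose.y + (delta.y ?? 0),
    z: pose.z + (delta.z ?? 0),
    yaw: pose.yaw + (delta.yaw ?? 0),
  };
}

// Serializes the pose so it could be sent to audience clients.
function encodePose(pose: Pose): string {
  return JSON.stringify(pose);
}

let pose: Pose = { x: 0, y: 0, z: 0, yaw: 0 };
pose = applyKey(pose, "c");
pose = applyKey(pose, "r");
console.log(encodePose(pose));
```

Keeping `applyKey` free of side effects means the same function could run on the audience side to replay updates, though sending the full pose, as `encodePose` does, is simpler and tolerates dropped messages.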